For instance, a basic property of sets is that disjointness from a given set is preserved by unions:Īpplying the dictionary in the reverse direction, one might now conjecture that if was independent of and was independent of, then should also be independent of, and furthermore thatīut these statements are well known to be false (for reasons related to pairwise independence of random variables being strictly weaker than joint independence). On the other hand, not every assertion about cardinalities of sets generalises to entropies of random variables that are not arising from restricting random boolean functions to sets. Which (together with non-negativity of conditional mutual information) implies the data processing inequality, and this identity is in turn easily established from the definition of mutual information.

Using the dictionary in the reverse direction, one is then led to conjecture the identity Firstly, if and are not necessarily disjoint outside of, then a consideration of Venn diagrams gives the more general inequalityĪnd a further inspection of the diagram then reveals the more precise identity

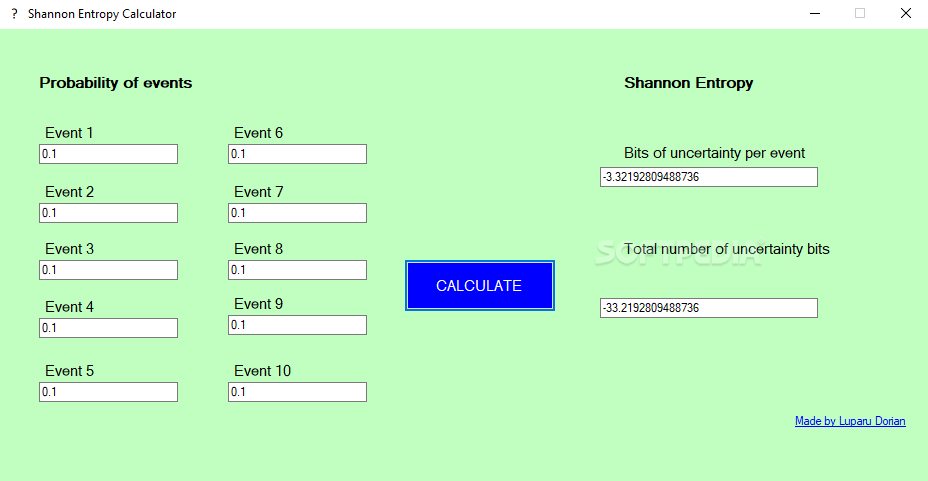

This dictionary also suggests how to prove the data processing inequality using the existing Shannon inequalities. Specialising to sets, this now says that if are disjoint outside of, then this can be made apparent by considering the corresponding Venn diagram. For instance, the Shannon inequality becomes the union bound, and the definition of mutual information becomes the inclusion-exclusion formulaįor a more advanced example, consider the data processing inequality that asserts that if are conditionally independent relative to, then. If one discards the normalisation factor, one then obtains the following dictionary between entropy and the combinatorics of finite sets: Random variablesĮvery (linear) inequality or identity about entropy (and related quantities, such as mutual information) then specialises to a combinatorial inequality or identity about finite sets that is easily verified. If is the restriction of to, and is the restriction of to, then the joint variable is equivalent to the restriction of to. In this case, has the law of a random uniformly distributed boolean function from to, and the entropy here can be easily computed to be, where denotes the cardinality of. One can get some initial intuition for these information-theoretic quantities by specialising to a simple situation in which all the random variables being considered come from restricting a single random (and uniformly distributed) boolean function on a given finite domain to some subset of : In a related vein, if and are equivalent in the sense that there are deterministic functional relationships, between the two variables, then is interchangeable with for the purposes of computing the above quantities, thus for instance, ,, , etc. At the other extreme, is a measure of the extent to which fails to depend on indeed, it is not difficult to show that if and only if is determined by in the sense that there is a deterministic function such that. 
Similarly, vanishes if and only if and are conditionally independent relative to. The mutual information is a measure of the extent to which and fail to be independent indeed, it is not difficult to show that vanishes if and only if and are independent. More generally, given three random variables, one can define the conditional mutual informationĪnd the final of the Shannon entropy inequalities asserts that this quantity is also non-negative. So we can define some further nonnegative quantities, the mutual information Given two random variables taking on finitely many values, the joint variable is also a random variable taking on finitely many values, and also has an entropy. (In some texts, one uses the logarithm to base rather than the natural logarithm, but the choice of base will not be relevant for this discussion.) This is clearly a nonnegative quantity. Given a random variable that takes on only finitely many values, we can define its Shannon entropy by the formula
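The gap between pairwise and joint independence can be seen in the standard exclusive-or example, where $X$ and $Y$ are independent uniformly distributed bits and $Z := X \oplus Y$ (this particular example is supplied for illustration and is not spelled out in the text). The minimal Python sketch below computes the relevant mutual informations by brute force over the four equally likely outcomes; the helper names `entropy` and `mutual_information` are illustrative only.

```python
from collections import Counter
from itertools import product
from math import log


def entropy(samples):
    """Shannon entropy (natural log) of a list of equally likely outcomes."""
    counts = Counter(samples)
    n = len(samples)
    # p * log(1/p) with p = c/n, summed over the distinct outcomes
    return sum((c / n) * log(n / c) for c in counts.values())


def mutual_information(pairs):
    """I(X:Y) = H(X) + H(Y) - H(X,Y) for a list of equally likely (x, y) outcomes."""
    xs = [x for x, _ in pairs]
    ys = [y for _, y in pairs]
    return entropy(xs) + entropy(ys) - entropy(pairs)


# X, Y independent uniform bits and Z = X xor Y; each of the four triples is equally likely.
outcomes = [(x, y, x ^ y) for x, y in product((0, 1), repeat=2)]

i_xz = mutual_information([(x, z) for x, _, z in outcomes])
i_yz = mutual_information([(y, z) for _, y, z in outcomes])
i_xy_z = mutual_information([((x, y), z) for x, y, z in outcomes])

# I(X:Z) and I(Y:Z) are 0 up to rounding; I((X,Y):Z) equals log 2
print(i_xz, i_yz, i_xy_z)
```

So $Z$ is independent of $X$ and of $Y$ separately, yet it is completely determined by $(X,Y)$, and the conjectured inequality $I((X,Y):Z) \le I(X:Z) + I(Y:Z)$ fails with left side $\log 2$ and right side $0$.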

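The dictionary between entropy and finite sets can also be checked by brute force. The Python sketch below assumes a three-element domain $\Omega$ (an arbitrary choice to keep the enumeration small) and uses hypothetical helper names `restrict`, `H` and `I`. It treats every boolean function $F: \Omega \to \{0,1\}$ as an equally likely outcome, forms the restrictions $X_A = F|_A$, and confirms, in natural-log units, that entropy, joint entropy, mutual information, conditional entropy and conditional mutual information equal $\log 2$ times $|A|$, $|A \cup B|$, $|A \cap B|$, $|A \setminus B|$ and $|(A \cap B) \setminus C|$ respectively, along with the identity $I(X:Z) + I(X:Y|Z) = I(X:Y) + I(X:Z|Y)$ used for the data processing inequality.

```python
from collections import Counter
from itertools import combinations, product
from math import isclose, log

# Assumption: a three-element domain Omega = {0, 1, 2}, chosen only to keep enumeration small.
OMEGA = range(3)

# All boolean functions F: OMEGA -> {0, 1}, encoded as tuples; each is equally likely.
FUNCTIONS = list(product((0, 1), repeat=len(OMEGA)))


def restrict(f, subset):
    """X_A := F restricted to A, encoded as the tuple of values on the sorted subset."""
    return tuple(f[w] for w in sorted(subset))


def H(*sets):
    """Joint entropy (natural log) of the restrictions X_A, X_B, ... of a uniform random F."""
    counts = Counter(tuple(restrict(f, s) for s in sets) for f in FUNCTIONS)
    n = len(FUNCTIONS)
    return sum((c / n) * log(n / c) for c in counts.values())


def I(a, b, given=frozenset()):
    """Conditional mutual information I(X_A : X_B | X_C) = H(A,C) + H(B,C) - H(A,B,C) - H(C)."""
    return H(a, given) + H(b, given) - H(a, b, given) - H(given)


subsets = [frozenset(c) for r in range(len(OMEGA) + 1) for c in combinations(OMEGA, r)]
LOG2 = log(2)

for A, B, C in product(subsets, repeat=3):
    assert isclose(H(A), len(A) * LOG2, abs_tol=1e-9)                # entropy <-> cardinality
    assert isclose(H(A, B), len(A | B) * LOG2, abs_tol=1e-9)         # joint variable <-> union
    assert isclose(I(A, B), len(A & B) * LOG2, abs_tol=1e-9)         # mutual information <-> intersection
    assert isclose(H(A, B) - H(B), len(A - B) * LOG2, abs_tol=1e-9)  # conditional entropy <-> difference
    assert isclose(I(A, B, C), len((A & B) - C) * LOG2, abs_tol=1e-9)
    # I(X:Z) + I(X:Y|Z) = I(X:Y) + I(X:Z|Y) with X, Y, Z = X_A, X_B, X_C
    assert isclose(I(A, C) + I(A, B, C), I(A, B) + I(A, C, B), abs_tol=1e-9)

print("dictionary verified for all", len(subsets) ** 3, "triples of subsets")
```

Enumerating all $2^{|\Omega|}$ functions grows exponentially with $|\Omega|$, so this is only a sanity check on tiny domains, not a practical way to compute entropies.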